AI Crawl Optimization — Definition

AI Crawl Optimization is the technical process of improving a website’s architecture, accessibility, semantic structure, and machine-readable signals so AI crawlers can efficiently discover, interpret, and prioritize its content for indexing and visibility across AI-driven search systems.

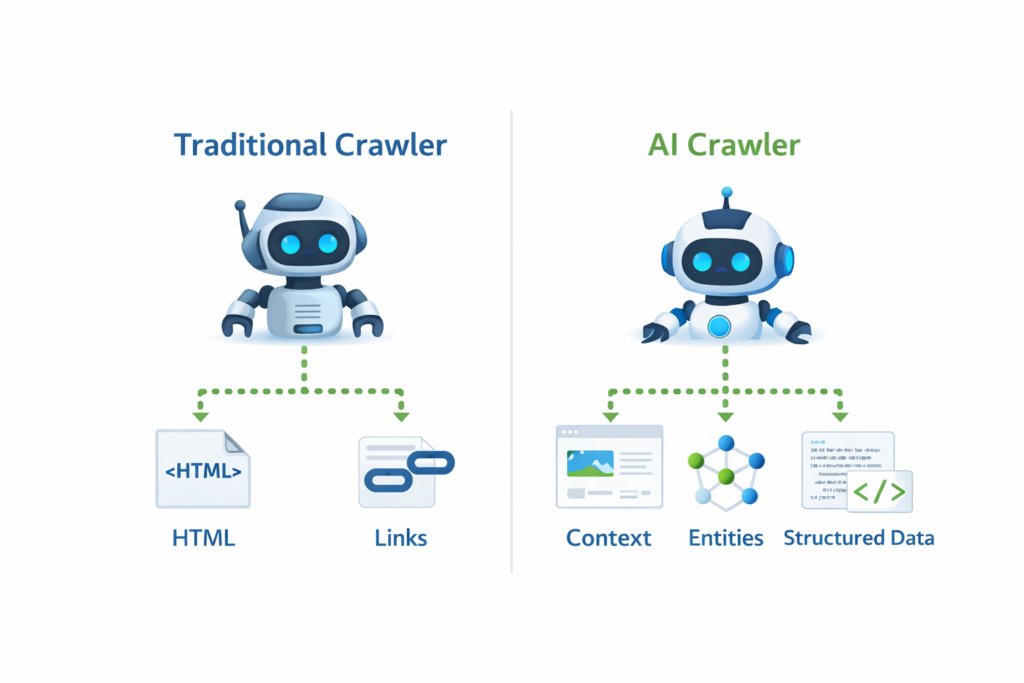

Unlike traditional crawling, which mainly parses links and HTML markup, AI crawler optimization focuses on meaning extraction, contextual relevance, structured data interpretation, and entity relationships. This makes AI crawlability a foundational requirement for modern search visibility.

Why AI Crawl Optimization Matters Now

Search ecosystems have evolved from simple link discovery into intelligent interpretation environments. AI systems increasingly evaluate content using contextual analysis rather than keyword matching. That shift means pages that are technically crawlable but semantically unclear may still fail to rank.

Sites optimized for AI indexing readiness typically achieve:

- faster discovery

- more consistent indexing

- improved semantic understanding

- higher trust signals

- stronger ranking eligibility

Websites that ignore AI crawl optimization often encounter indexing inconsistencies similar to those explained in Indexed Not Submitted in Sitemap (2026), where discovery and submission signals conflict.

How AI Crawlers Work

AI crawlers operate in layered evaluation stages:

Stage 1 — Access Validation

The crawler checks permissions through directives, headers, and server response behavior. Misconfigured directives can block access entirely. Configuring them correctly, as described in the Robots.txt Generator guide 2026, helps ensure crawlers can reach important pages.
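As a rough illustration of the access check, the sketch below uses Python's standard `urllib.robotparser` to test whether a given bot may fetch a URL. The robots.txt content, the `ExampleAIBot` name, and the paths are all invented for this example; substitute the user-agent tokens published by the crawlers you care about.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: "ExampleAIBot" and both paths are made up for illustration.
ROBOTS_TXT = """\
User-agent: ExampleAIBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""


def is_allowed(robots_txt, user_agent, url):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    checks = [
        ("ExampleAIBot", "https://example.com/private/page"),
        ("ExampleAIBot", "https://example.com/blog/post"),
        ("OtherBot", "https://example.com/admin/settings"),
    ]
    for agent, url in checks:
        print(agent, url, "allowed =", is_allowed(ROBOTS_TXT, agent, url))
```

Note that a bot with its own `User-agent` group follows only that group, so in this sample `ExampleAIBot` is not bound by the `/admin/` rule written for `*`. That kind of surprise is worth testing for before deploying a new file.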

Stage 2 — Structural Mapping

The system maps internal links and hierarchy to understand page relationships. Logical architecture improves AI search visibility because crawlers can infer topical clusters.

Stage 3 — Semantic Interpretation

Instead of counting keywords, AI systems analyze meaning using entities, context signals, and structured markup.

Stage 4 — Priority Scoring

Pages are assigned crawl importance scores based on:

- topical authority

- internal link weight

- update frequency

- structured data presence
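No crawler publishes its actual scoring formula, so the following is only a toy model of how the four factors above could be combined. The weights, the 0 to 1 normalization, and the `PageSignals` structure are assumptions chosen for illustration, useful mainly for ranking your own pages when deciding what to fix first.

```python
from dataclasses import dataclass

# Assumed weights for illustration only; they sum to 1.0 so scores stay in [0, 1].
WEIGHTS = {
    "topical_authority": 0.4,
    "internal_link_weight": 0.3,
    "update_frequency": 0.2,
    "structured_data": 0.1,
}


@dataclass
class PageSignals:
    url: str
    topical_authority: float     # 0.0 to 1.0, hypothetical normalized score
    internal_link_weight: float  # 0.0 to 1.0, e.g. share of internal links pointing here
    update_frequency: float      # 0.0 to 1.0, higher means updated more often
    has_structured_data: bool


def crawl_priority(page):
    """Weighted sum of the four signals; a toy stand-in for a real priority score."""
    return (
        WEIGHTS["topical_authority"] * page.topical_authority
        + WEIGHTS["internal_link_weight"] * page.internal_link_weight
        + WEIGHTS["update_frequency"] * page.update_frequency
        + WEIGHTS["structured_data"] * (1.0 if page.has_structured_data else 0.0)
    )


def rank_pages(pages):
    """Return pages sorted from highest to lowest toy priority."""
    return sorted(pages, key=crawl_priority, reverse=True)
```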

AI Crawlers vs Traditional Crawlers

| Feature | Traditional Crawlers | AI Crawlers |
|---|---|---|
| Primary Goal | discover URLs | understand content |
| Analysis | HTML parsing | semantic interpretation |
| Ranking Signal Focus | backlinks | contextual authority |
| Evaluation | page level | site-wide context |
| Rendering | optional | critical |

Understanding this difference is essential. Many site owners optimize only for classic bots and ignore AI crawler optimization signals, which limits visibility in modern search systems.

Core Signals That Improve AI Crawl Optimization

Professional SEO workflows prioritize these signals first:

1. Crawl Accessibility

If bots cannot access content, optimization is irrelevant. Always validate accessibility using tools such as a Google Index Checker, and confirm that the server returns the expected status codes.
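One way to make the status-code check concrete is to bucket each response into a rough verdict. The sketch below assumes you have already collected a URL-to-status mapping (from a crawl export, for instance); the verdict labels and the `audit` helper are illustrative names, not part of any tool.

```python
def crawl_verdict(status):
    """Map an HTTP status code to a rough crawl-accessibility verdict."""
    if 200 <= status < 300:
        return "ok"
    if status in (301, 308):
        return "permanent redirect"
    if 300 <= status < 400:
        return "temporary or other redirect"
    if status in (401, 403):
        return "access denied"
    if status in (404, 410):
        return "not found or gone"
    if status == 429 or 500 <= status < 600:
        return "rate limited or server error"
    return "other"


def audit(responses):
    """Return only the URLs whose status needs attention, with their verdicts."""
    problems = {}
    for url, status in responses.items():
        verdict = crawl_verdict(status)
        if verdict != "ok":
            problems[url] = verdict
    return problems
```

For example, `audit({"/": 200, "/old": 301, "/gone": 404})` returns entries for `/old` and `/gone` only.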

2. Structured Architecture

Clear internal linking improves interpretation. Topical structure directly influences crawler comprehension, which is why authority-focused strategies described in Topical Authority in SEO (2026) improve indexing reliability.

3. XML Sitemap Integrity

Accurate sitemaps accelerate discovery and prioritization. Generating clean sitemap files with an XML Sitemap Generator and submitting them helps crawlers locate important URLs quickly.
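A basic integrity check can be scripted with the standard library. This sketch parses a sitemap in the sitemaps.org format and flags duplicate entries and non-absolute URLs; the sample XML and the `audit_sitemap` name are made up for illustration, and a fuller audit would also compare entries against live status codes.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Invented sample with one duplicate and one relative URL.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog</loc></url>
  <url><loc>https://example.com/blog</loc></url>
  <url><loc>/about</loc></url>
</urlset>"""


def audit_sitemap(xml_text):
    """Count <loc> entries and report duplicates and non-absolute URLs."""
    root = ET.fromstring(xml_text)
    urls = [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
    seen = set()
    duplicates = []
    invalid = []
    for url in urls:
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            invalid.append(url)
        if url in seen:
            duplicates.append(url)
        seen.add(url)
    return {"count": len(urls), "duplicates": duplicates, "invalid": invalid}
```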

4. Rendering Compatibility

AI crawlers often render pages similarly to browsers. JavaScript-dependent content that fails rendering may be ignored or misunderstood.
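A quick way to spot rendering risk is to check whether key phrases exist in the raw HTML before any JavaScript runs. The sketch below is a simplification under that assumption: it extracts visible text with the standard `html.parser` and reports phrases that are missing. The sample page and the `missing_without_js` helper are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text from raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip and data.strip():
            self.chunks.append(data.strip())


def missing_without_js(raw_html, required_phrases):
    """Return the phrases that do not appear in the server-delivered HTML text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in required_phrases if phrase.lower() not in text]


# Invented page: the pricing text only exists after the script runs.
RAW_HTML = """<html><body>
<h1>Welcome</h1>
<div id="app"></div>
<script>document.getElementById("app").textContent = "Pricing plans";</script>
</body></html>"""
```

Any phrase this check reports as missing depends on client-side rendering, so it is a candidate for server-side rendering or static markup.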

5. Semantic Context Signals

Entities, structured headings, schema markup, and topical consistency help crawlers determine meaning rather than just reading text. Pages that provide clear semantic structure are easier for AI systems to interpret.
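Schema markup is commonly delivered as a JSON-LD script tag. The sketch below builds a minimal schema.org `Article` object with `about` entities; the headline, author, date, and entity names passed in are placeholders, and the property set shown is a small assumed subset rather than a complete or required one.

```python
import json


def article_jsonld(headline, author_name, date_published, about_entities):
    """Build a JSON-LD <script> tag describing an Article and its main entities."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "about": [{"@type": "Thing", "name": name} for name in about_entities],
    }
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Placeholder values for illustration.
    print(article_jsonld(
        "AI Crawl Optimization",
        "Jane Doe",
        "2026-01-15",
        ["Web crawler", "Structured data"],
    ))
```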

6. Internal Link Depth

Important pages should be reachable within three clicks. Deep pages receive lower crawl priority and may be skipped entirely.
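Click depth can be measured with a breadth-first search over the internal link graph. The sketch below assumes the graph is already available as a mapping from each page to the pages it links to (for example from a crawl export); the sample paths are invented.

```python
from collections import deque


def click_depths(links, start):
    """Breadth-first search: minimum number of clicks from start to each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, start, max_clicks=3):
    """Pages that need more than max_clicks clicks to reach from start."""
    depths = click_depths(links, start)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Invented link graph for illustration.
LINKS = {
    "/": ["/blog", "/tools"],
    "/blog": ["/blog/a"],
    "/blog/a": ["/blog/a/1"],
    "/blog/a/1": ["/blog/a/1/x"],
}
```

Here `too_deep(LINKS, "/")` flags `/blog/a/1/x`, which sits four clicks from the homepage. Pages that never appear in the result of `click_depths` are unreachable through internal links at all.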

7. Performance Signals

Slow server response reduces crawl frequency. Testing performance with a Page Speed Checker helps confirm that rendering speed does not block crawler processing.

AI Crawl Optimization vs Crawl Budget

These two concepts are frequently confused but represent different technical mechanisms.

- Crawl Budget determines how many pages a crawler visits.

- AI Crawl Optimization determines how effectively the crawler understands those pages.

A site may have a high crawl budget yet still perform poorly if semantic clarity is weak. For a deeper explanation of crawl resource allocation, review What Is Crawl Budget in SEO?

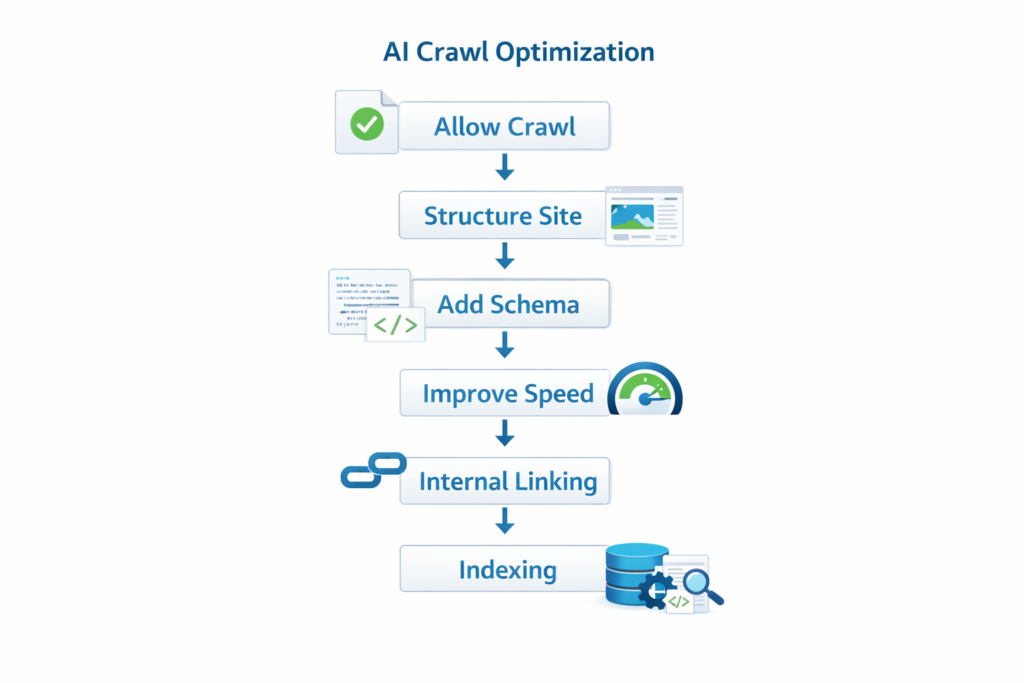

Technical Implementation Workflow

Professional optimization teams follow this sequence:

- allow AI bots through directives

- validate server response codes

- remove crawl barriers

- ensure sitemap accuracy

- fix broken links

- add structured data

- optimize internal linking

- improve rendering performance

Many structural issues can be detected early using diagnostics explained in Broken Link Finder Guide, which demonstrates how crawler pathways fail.

Common Mistakes That Block AI Crawlers

Most crawl failures originate from technical misconfigurations rather than content quality problems. The most frequent issues include:

- blocked bots in robots directives

- orphan pages without internal links

- duplicate URLs without canonical signals

- excessive redirect chains

- inconsistent sitemap data

- missing structured markup

- low semantic clarity

Fixing these issues alone often leads to indexing improvements without any additional content changes.
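Two of the issues listed above, redirect chains and orphan pages, are easy to detect once crawl data is in memory. The sketch below assumes a redirect map and a link graph exported from a crawl; the function names, the `entry_points` parameter, and the sample paths are illustrative.

```python
def redirect_chain(redirects, start, max_hops=10):
    """Follow a redirect map from start; stop at the final URL, a loop, or max_hops."""
    chain = [start]
    while chain[-1] in redirects and len(chain) - 1 < max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if chain.count(target) > 1:  # the same URL appeared twice: redirect loop
            break
    return chain


def orphan_pages(sitemap_urls, links, entry_points=("/",)):
    """Sitemap URLs that no crawled page links to, ignoring known entry points."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(set(sitemap_urls) - linked - set(entry_points))


# Invented data for illustration.
REDIRECTS = {"/old": "/older", "/older": "/new"}
```

A chain longer than two entries means more than one hop; pointing `/old` straight at `/new` removes the extra request.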

Relationship Between AI Crawl Optimization and Rankings

Crawling does not guarantee rankings, but ranking cannot happen without proper crawling. AI systems must first discover and interpret pages before evaluating their authority or relevance.

Google’s official crawling and indexing documentation describes how crawl accessibility, structured signals, and internal linking influence the way search systems discover, interpret, and prioritize web content.

This means AI crawlability is not optional. It is a prerequisite for visibility.

Advanced Optimization Signals

Experienced SEO professionals enhance AI crawler optimization through deeper technical strategies:

Entity Reinforcement

Mention related concepts consistently across pages to strengthen semantic relationships.

Structured Content Blocks

Clear headings, definitions, lists, and FAQs help AI systems parse meaning efficiently.

Contextual Internal Linking

Linking related pages tells crawlers how topics connect. This is one of the strongest signals for semantic clarity.

Canonical Consistency

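Canonical tags tell crawlers which URL is the preferred version when the same content is reachable at several addresses, which prevents conflicting duplicate signals.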
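Canonical consistency can be spot-checked by reading the `rel="canonical"` link from each page and comparing it with the page's own URL. This is a minimal sketch using the standard `html.parser`; the status labels and the `canonical_status` helper are assumptions for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Collect href values of <link rel="canonical"> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        href = attributes.get("href")
        if "canonical" in rel_values and href:
            self.hrefs.append(href)


def canonical_status(html, page_url):
    """Classify a page as 'missing', 'conflicting', 'self', or 'points elsewhere'."""
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    # Resolve relative hrefs against the page URL before comparing.
    targets = {urljoin(page_url, href) for href in finder.hrefs}
    if not targets:
        return "missing"
    if len(targets) > 1:
        return "conflicting"
    return "self" if targets == {page_url} else "points elsewhere"
```

A parameterized URL such as `/shoes?color=red` that reports "points elsewhere" is usually behaving as intended, while "missing" or "conflicting" on a primary page deserves a closer look.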

Log File Analysis

Analyzing server logs reveals real crawler behavior, showing which pages bots actually visit.
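As a starting point for log analysis, the sketch below counts bot requests per path from access-log lines in the common "combined" format. The sample lines are fabricated, and the user-agent tokens are examples to verify against each vendor's documentation. User-agent strings can be spoofed, so treat the counts as indicative rather than verified.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user-agent fields of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Example tokens; confirm the current values in each crawler's own documentation.
BOT_TOKENS = ("Googlebot", "GPTBot", "ClaudeBot", "PerplexityBot")


def bot_hits(lines, tokens=BOT_TOKENS):
    """Count requests per (bot token, path) for lines whose user agent contains a token."""
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        agent = match.group("agent").lower()
        for token in tokens:
            if token.lower() in agent:
                hits[(token, match.group("path"))] += 1
                break
    return hits


# Fabricated sample lines using documentation-range IP addresses.
SAMPLE_LOG = [
    '192.0.2.10 - - [10/Jan/2026:10:00:00 +0000] "GET /blog HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.11 - - [10/Jan/2026:10:00:05 +0000] "GET /blog HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '192.0.2.10 - - [10/Jan/2026:10:01:00 +0000] "GET /blog HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '192.0.2.12 - - [10/Jan/2026:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/120.0"',
]
```

Comparing the paths that appear here with the sitemap shows which important pages bots never request.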

Professional Strategy for Fast Results

The fastest way to improve AI indexing readiness is to prioritize technical clarity before content expansion.

High-impact optimization order

- crawl access validation

- sitemap accuracy

- internal linking

- structured signals

- performance speed

- semantic reinforcement

Sites that follow this order typically see indexing improvements within weeks.

Future of AI Crawl Optimization

AI search is evolving toward contextual engines that evaluate intent rather than keywords. This means optimization will increasingly depend on:

- entity clarity

- topical depth

- structured meaning

- contextual linking

Websites that adopt AI crawler optimization early gain a structural advantage because their content is easier for systems to interpret.

FAQ — AI Crawl Optimization

What is AI Crawl Optimization in simple terms?

It is the process of making a website easy for AI crawlers to access, understand, and prioritize.

Why is AI crawlability important?

If AI bots cannot interpret your content correctly, your pages may not appear in search results or AI-generated answers.

How is AI crawling different from traditional crawling?

Traditional crawlers focus on links and HTML, while AI crawlers analyze meaning, structure, and context.

How can I test AI crawler accessibility?

You can verify accessibility using index checkers, crawler diagnostics, and server response testing tools.

Does AI Crawl Optimization directly improve rankings?

No. It improves crawlability and understanding, which are prerequisites for ranking.